2016 MIT SSAC Soccer Analytics Panel: Good intentions, so-so execution

Categories: Conferences and Symposia

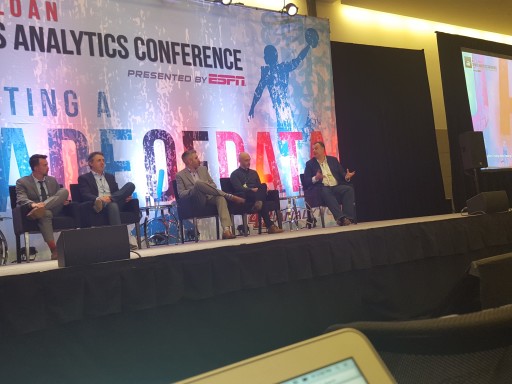

Introducing the Soccer Analytics panel at the 2016 MIT Sloan Sports Analytics Conference. L-R: Student hostess, Andrew Wiebe, Devin Pleuler, Chris Anderson, Paul Carr, Blake Wooster. Not pictured: Gabriele Marcotti.

As I said Thursday night, I come to these conference panel discussions with low expectations. With competitors and journalists in the audience, there is very little chance of hearing candid discussion from the participants, with the exception of Mark Cuban. I’ve had some time to collect my thoughts about yesterday’s Soccer Analytics panel, and my overall impression is that while the intent of the panel was good, the discussion didn’t flow very well.

Returning to the panel as moderator was MLSSoccer.com’s Andrew Wiebe, and he was joined by Devin Pleuler (Toronto FC), Chris Anderson (Coventry City FC), Paul Carr (ESPN), Blake Wooster (21st Club), and Gabriele Marcotti (everywhere).

First of all, I strongly disliked the layout of the panel on the stage:

With an even number of panelists, the placement of the end tables would not have been an issue. But with an odd number of panelists, as in this session, the tables had the effect of creating a literal odd man out in Gabriele Marcotti. As a member of the audience, I felt that Marcotti was more or less a detached observer of the panel session. When I look at my session notes, however, Marcotti was called upon about 20% less often than Pleuler, Anderson, and Wooster, yet Paul Carr was called upon even less often than he was.

As for the discussion, the panel hit upon the following topics:

- Changes in attitude in the soccer industry over the last 12 months: The biggest change over the last 12 months has been the movement of leading analysts, whether bloggers, self-employed, or employees of the data companies, into important positions at football clubs. And they aren’t being given roles as analysts and shunted off to the side; these are positions of real responsibility. Among the public, there is a greater appetite for analytical content. That appetite remains concentrated among a subset of sports fans, but it’s not a small subset. Marcotti made the important point that the term “analytics” still encompasses an overly broad category of quantitative information, much of it not useful. Pleuler said that clubs are more interested in analytics than before, but the topic remains uncharted territory for them.

- The challenges of finding qualified analysts: Wooster mentioned the importance of finding analysts with emotional intelligence as well as conventional intelligence and technical aptitude. I did like hearing someone talk about the importance of being able to write one’s own software code. To me this challenge is akin to the broader challenge of finding qualified data scientists (sports analysts being a very specific subset).

- Building trust between analysts and decisionmakers: This is similar to what has been discussed in other sports — an analyst has to produce enough findings that validate what decisionmakers already “know” before they are willing to accept findings that are non-intuitive or challenge conventional wisdom. Communication is everything, as well as the ability to ask the right questions to produce the relevant answers (Wooster hit this point hard). Pleuler emphasized the trust issue, as did Carr and Anderson.

- The issue of data availability: This is the thorny issue of public versus proprietary data. Compared to previous years, in which panelists expressed frustration with lack of access to data, the panelists to a man (except Marcotti) stated that this was less of an issue. Wooster said that it’s more important for a prospective analyst to develop a compelling use case in order to get access to data. Anderson argued that one can create actionable information from simple data (and he’s written a book to prove it), and that the real challenge is compiling the data from multiple sources and connecting it to operational decision-making.

- Simple versus complex models: In Pleuler’s experience, if a model is too complex to be explained to a decisionmaker, it is too complex to be useful. The emphasis is on simple models and graphics-heavy visualizations of data and analytical information. The other challenge is expressing analytical knowledge in the language of football. Wooster gave a great example of Tim Sherwood (former manager of Tottenham Hotspur), who stated that expected goals were “rubbish” yet in multiple post-match interviews complained that his side wasn’t getting the results they deserved despite creating better chances. But that’s a succinct explanation of expected goals!

- Identifying players of interest: As Pleuler said, “If you’re signing a player solely on analytics, you’re doing it wrong.”

A significant amount of the conversation was devoted to expected goals models. All of the panelists felt that expected goals is an important metric that has gained wide appeal among the broader football public because of its relative simplicity of construction. It also serves as a proxy for other unobservables such as over/underperformance of players and teams, and ultimately expected market value. Some of the panelists expressed reservations about the model, in particular Pleuler, who cited issues with bias, overvaluation of attacking play, and undervaluation of audacious plays (he gave Carli Lloyd’s goal from midfield in the Women’s World Cup final as an example).
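To illustrate the "relative simplicity of construction" mentioned above, here is a minimal sketch of how an expected goals total is usually built: each shot gets an estimated probability of being scored, and those probabilities are summed. The shot probabilities below are invented for illustration, and the function names are hypothetical; none of this comes from the panel or from any particular provider's model.

```python
def expected_goals(shot_probabilities):
    """Sum per-shot scoring probabilities into an expected goals total."""
    for p in shot_probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"shot probability out of range: {p}")
    return sum(shot_probabilities)


def performance_vs_xg(goals_scored, shot_probabilities):
    """Goals scored minus expected goals: positive means overperformance."""
    return goals_scored - expected_goals(shot_probabilities)


# Hypothetical match: the home side creates the better chances but fails to score.
home_shots = [0.35, 0.20, 0.10, 0.05]  # assumed per-shot probabilities
away_shots = [0.08, 0.04]              # assumed per-shot probabilities

home_xg = expected_goals(home_shots)
away_xg = expected_goals(away_shots)
home_delta = performance_vs_xg(0, home_shots)  # scored 0 goals
away_delta = performance_vs_xg(1, away_shots)  # scored 1 goal

print(f"Home xG {home_xg:.2f}, away xG {away_xg:.2f}")
print(f"Home vs xG {home_delta:+.2f}, away vs xG {away_delta:+.2f}")
```

In this made-up match the home side loses 0-1 despite the higher expected goals total, which is the "better chances, worse result" situation the metric is meant to capture. The hard part in practice is estimating the per-shot probabilities, which is where the reservations about bias come in.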

There were other topics covered in the audience question period, such as Leicester City’s title run (Anderson: excellent recruitment policy, smart spending, a level-headed manager, a career season by Vardy, but perhaps a one-off phenomenon), long-term plans for club investment (Wooster: legal and financial matters are important, but the playing squad also impacts value and is not easily quantified), analysis of attacking versus defensive play (Pleuler: not as simple as inverting attacking behaviors into defensive ones), and what’s in store for the next 12 months (Marcotti: transparency in the transfer system and club payments would have a huge impact on the ability to value players and clubs accurately).

When I look back at the session, there was a lot more content than I had recognized at the time. I think the session would have benefited from more spontaneous interaction between the panelists, but it seemed that everyone was sticking to their own lane. Perhaps the presence of a truly independent blogger who is knee-deep in analytics would have been valuable for drawing out the other panelists (the Aaron Schatz effect).

So in summary, this year’s Soccer Analytics panel wasn’t a step down from last year, but it wasn’t a massive step up, either.